Using Neuroimaging Data for Exploring Conversational Engagement in Human-Robot Interaction

Objective

This project will address interactions with novel conversational systems, such as social robots and digital assistants. These technologies can assist people in settings such as health care, elderly care, education, public spaces and homes.

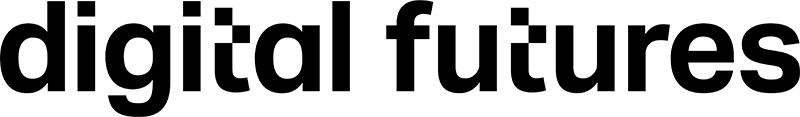

A telepresence system for human-robot interaction will be developed, allowing participants to situate themselves in natural conversations while lying in a functional magnetic resonance imaging (fMRI) scanner. From inside the scanner, each participant will interact directly with a human-like robot and with other human actors.

This neuroimaging experiment will allow us to understand the cognitive processes underlying engagement. It will also enable an unprecedented, in-depth evaluation of conversational engagement as a user state and of how that engagement is perceived.

Background

In everyday conversations, a speaker and a listener are involved in a common project that relies on close coordination, requiring each participant’s continuous attention and related engagement. At the same time, additional bystanders might show less engagement in the conversation.

Previous research in human-human and human-robot interaction has identified four types of events, involving gesture and speech, that establish engagement: (1) joint directed gaze at objects, (2) mutual facial gaze, (3) back-and-forth conversation, and (4) short feedback, such as nods, while the speaker is talking. We will study the underlying neural signatures of conversational engagement.

Cross-disciplinary collaboration

The researchers in the team represent the Department of Intelligent Systems at KTH EECS and the Psychology and Linguistics Departments at Stockholm University.

A recorded presentation of the project is available from the Digitalize in Stockholm 2023 event.

Contacts

André Tiago Abelho Pereira

Researcher at KTH, Co-PI: Using Neuroimaging Data for Exploring Conversational Engagement in Human-Robot Interaction, Digital Futures Faculty

atap@kth.se

Julia Uddén

Assistant Professor at Stockholm University, Co-PI of the project Using Neuroimaging Data for Exploring Conversational Engagement in Human-Robot Interaction at Digital Futures, Digital Futures Faculty

08-16 32 32

julia.udden@psychology.su.se